-

2015-09-22

Docker Global Hack Day #3, Bangalore Edition

We organized Docker Global Hack Day at the Red Hat office on 19th Sep’15. Though there were lots of RSVPs, the turnout for the event was lower than expected. We started the day by showing the recording of the kick-off event. The …

-

2015-09-10

Simulating Race Conditions

The Tiering feature was introduced in the Gluster 3.7 release, and Geo-replication may not work well with Tiering yet. Races can happen because Rebalance moves files from one brick to another (hot to cold and cold to hot), but the Changelog/Journal remains in the old brick. We know there will be problems, since each Geo-replication worker (one per brick) processes the Changelogs belonging to its own brick and syncs the data independently. Syncing is a two-step operation: first create the entry in the Slave with the GFID recorded in the Changelog, then use Rsync to sync the data (using GFID access).

To uncover the bugs we need to set up a workload and run it multiple times, since the issues may not show up every time. But running it repeatedly with actual data is tedious. How about simulating/mocking it?

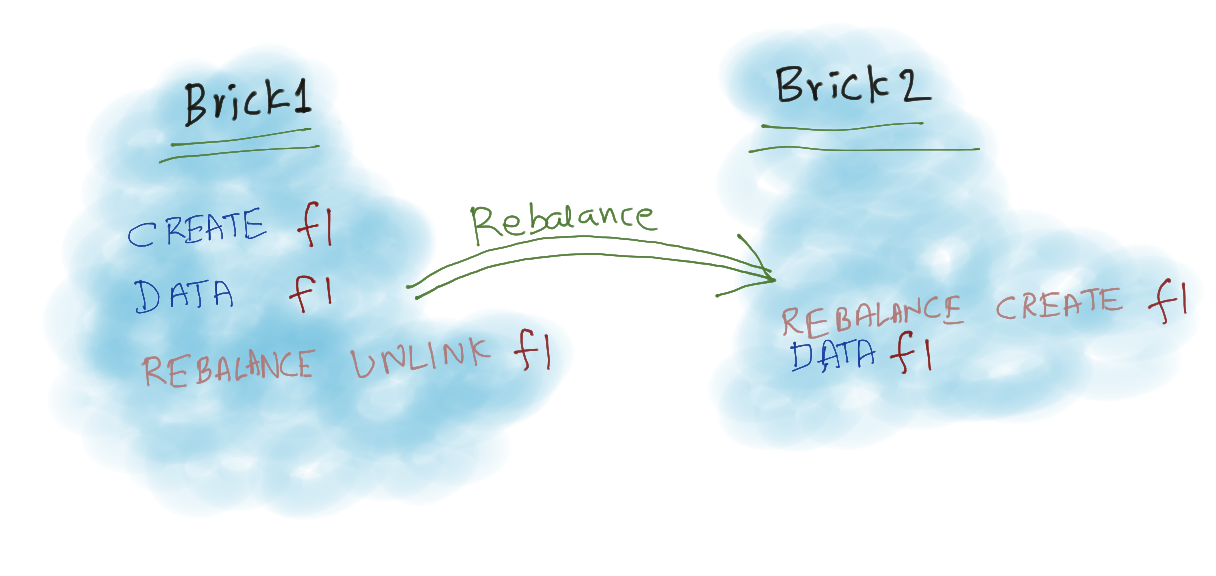

Let us consider a simple case of Rebalance: a file “f1” is created in Brick1, and after some time it becomes hot and Rebalance moves it to Brick2.

Changelog does not capture Rebalance traffic, so the respective brick Changelogs will contain:

    # Brick1 Changelog
    CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

    # Brick2 Changelog
    DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

If the Brick1 worker processes its Changelog first, the entry is created in the Slave and the data operation succeeds. But since both workers run independently, the sequence of execution may be any of these:

    # Possible Sequence 1
    [Brick1] CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    [Brick1] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef
    [Brick2] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

    # Possible Sequence 2
    [Brick2] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef
    [Brick1] CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    [Brick1] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

    # Possible Sequence 3
    [Brick1] CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    [Brick2] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef
    [Brick1] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

There is no problem with the first and last sequences, but in the second sequence Rsync tries to sync the data before the entry is created, and fails.
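To make the failing order concrete, here is a minimal Python sketch that replays the three sequences above against a mock Slave. It is not Geo-replication code: `replay` is a hypothetical helper and the model is deliberately simplified (an assumption): the Slave is just a set of GFIDs, CREATE adds the GFID, and DATA (the Rsync step via GFID access) fails if the GFID has no entry yet.

```python
GFID = "0945daec-6f8c-438e-9bbf-b2ebf07543ef"

def replay(sequence):
    """Apply (brick, op) steps to a mock Slave and return the failed steps.

    Simplified model (an assumption, not Geo-replication internals):
    the Slave is a set of GFIDs; CREATE makes the entry and DATA
    needs the entry to exist already.
    """
    slave = set()
    failures = []
    for brick, op in sequence:
        if op == "CREATE":
            slave.add(GFID)
        elif op == "DATA" and GFID not in slave:
            failures.append((brick, op))  # Rsync ran before the entry existed
    return failures

# The three possible sequences listed above, without the GFIDs.
sequences = {
    1: [("Brick1", "CREATE"), ("Brick1", "DATA"), ("Brick2", "DATA")],
    2: [("Brick2", "DATA"), ("Brick1", "CREATE"), ("Brick1", "DATA")],
    3: [("Brick1", "CREATE"), ("Brick2", "DATA"), ("Brick1", "DATA")],
}

for number, sequence in sequences.items():
    print(number, replay(sequence) or "ok")
```

Only sequence 2 reports a failure, and it is the Brick2 DATA step.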

To solve this issue, we thought of also recording the CREATE from Rebalance traffic. With that, the brick Changelogs look like:

    # Brick1 Changelog
    CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

    # Brick2 Changelog
    CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

and the possible sequences are:

    # Possible Sequence 1
    [Brick1] CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    [Brick1] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef
    [Brick2] CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    [Brick2] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

    # Possible Sequence 2
    [Brick2] CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    [Brick1] CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    [Brick1] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef
    [Brick2] DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

    # and many more...

With this change the problem goes away: the second CREATE fails with EEXIST, which we ignore since it is a safe error. But does this approach solve all the problems with Rebalance? As more FOPs are added, it becomes very difficult to visualize or guess the problems.
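The "ignore EEXIST" rule can be illustrated with plain POSIX file creation. This is only a sketch on a local temporary directory, not Geo-replication's own entry-creation code (which creates entries by GFID on the Slave); `create_entry` is a hypothetical helper. Opening with `O_CREAT | O_EXCL` makes the second create fail with EEXIST, which the helper treats as success while still raising any other error.

```python
import errno
import os
import tempfile

def create_entry(path):
    """Create an empty file at path; an existing entry counts as success.

    Returns True if this call created the file, False on EEXIST.
    """
    try:
        fd = os.open(path, os.O_CREAT | os.O_EXCL | os.O_WRONLY, 0o644)
    except OSError as err:
        if err.errno != errno.EEXIST:
            raise  # any other error is a real failure
        return False  # another worker created it first: safe to ignore
    os.close(fd)
    return True

with tempfile.TemporaryDirectory() as slave_dir:
    f1 = os.path.join(slave_dir, "f1")
    print(create_entry(f1))  # True: the first CREATE makes the entry
    print(create_entry(f1))  # False: the second CREATE hits EEXIST, ignored
```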

To mock the concurrent workload, collect the sequence from each brick's Changelog and mix the sequences together, making sure that the order within each brick remains the same after mixing.

For example,

    b1 = ["A", "B", "C", "D", "E"]
    b2 = ["F", "G"]

While mixing b2 into b1, for the first element of b2 we randomly choose a position in b1. Say the random position is 2 (index 2); we insert “F” at index 2 of b1:

    # before
    ["A", "B", "C", "D", "E"]
    # after
    ["A", "B", "F", "C", "D", "E"]

Now, to insert “G”, we randomly choose a position anywhere after “F”. Once we have the mixed sequence, we mock the FOPs and compare the results with the expected values.
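The mixing step described above can be sketched in Python as follows. `mix` is a hypothetical helper, not the code from the gist; it accepts a `random.Random` instance so that a seed which exposes a bug can be replayed later.

```python
import random

def mix(b1, b2, rng=random):
    """Randomly merge b2 into a copy of b1, keeping each list's own order.

    Each item of b2 goes to a random index no earlier than just after
    the previously inserted b2 item, as in the "F" then "G" example.
    """
    mixed = list(b1)
    low = 0  # smallest index the next b2 item may take
    for item in b2:
        pos = rng.randint(low, len(mixed))  # inclusive: len(mixed) appends
        mixed.insert(pos, item)
        low = pos + 1  # the next b2 item must land after this one
    return mixed

b1 = ["A", "B", "C", "D", "E"]
b2 = ["F", "G"]
rng = random.Random(42)  # fixed seed so a failing mix can be reproduced
for _ in range(3):
    print(mix(b1, b2, rng))
```

This procedure does not pick every interleaving with equal probability (placing “F” early leaves more possible positions for “G”, so each of them is less likely), which is usually acceptable for randomized testing.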

I added a gist for testing the following workload; it generates multiple sequences for testing.

    # f1 created in Brick1, Rebalanced to Brick2 and then Unlinked

    # Brick1 Changelog
    CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef

    # Brick2 Changelog
    CREATE 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1
    DATA 0945daec-6f8c-438e-9bbf-b2ebf07543ef
    UNLINK 0945daec-6f8c-438e-9bbf-b2ebf07543ef f1

It found two bugs:

- Trying to sync data after UNLINK (which can be handled in Geo-rep by an Rsync retry)

- An empty file gets created.
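The gist itself is not reproduced here. As a rough, hypothetical stand-in, the Python sketch below enumerates every order-preserving interleaving of the two Changelogs above (feasible for Changelogs this small, and an alternative to random mixing) and replays each one against a mock Slave that only tracks whether f1 exists. `interleavings`, `replay` and the existence-only model are assumptions for illustration, not the author's script. In this model a Brick1 DATA that runs after Brick2's UNLINK fails (the first bug), and a Brick1 CREATE that runs after the UNLINK leaves f1 on the Slave, which is how this model surfaces the empty-file case.

```python
from itertools import combinations

def interleavings(b1, b2):
    """Yield every merge of b1 and b2 that keeps each brick's own order."""
    n = len(b1) + len(b2)
    for slots in combinations(range(n), len(b1)):  # positions taken by b1
        it1, it2 = iter(b1), iter(b2)
        yield [next(it1) if i in slots else next(it2) for i in range(n)]

def replay(sequence):
    """Replay (brick, op) steps on a mock Slave that only knows if f1 exists.

    Hypothetical model: CREATE on an existing entry is EEXIST (ignored),
    UNLINK of a missing entry is ignored, DATA needs the entry to exist.
    Returns (failed DATA steps, whether f1 is left on the Slave).
    """
    exists = False
    failed = []
    for brick, op in sequence:
        if op == "CREATE":
            exists = True
        elif op == "DATA" and not exists:
            failed.append((brick, op))
        elif op == "UNLINK":
            exists = False
    return failed, exists

brick1 = [("Brick1", "CREATE"), ("Brick1", "DATA")]
brick2 = [("Brick2", "CREATE"), ("Brick2", "DATA"), ("Brick2", "UNLINK")]

for sequence in interleavings(brick1, brick2):
    failed, left_behind = replay(sequence)
    # f1 was unlinked on the Master, so the Slave should end up without it
    if failed or left_behind:
        steps = " ".join(f"[{brick}] {op}" for brick, op in sequence)
        print(steps, "| DATA failed" if failed else "| f1 left on Slave")
```

With these two Changelogs there are 10 interleavings; the sketch flags three where DATA fails after UNLINK and one where f1 is left behind on the Slave.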

I have just started simulating the Tiering + Geo-replication workload, and I may encounter more problems with Renames (simple, multiple and cyclic). I will update the results soon.

I am sharing the script since it can be easily modified to work with different workloads and to test other projects/components.

Let me know if this is useful. Comments and suggestions are welcome.

-

2015-08-18

Thanking Oh-My-Vagrant contributors for version 1.0.0

The Oh-My-Vagrant project became public about one year ago and at the time it was more of a fancy template than a robust project, but 188 commits (and counting) later, it has gotten surprisingly useful and mature. james@computer:~/code/oh-my-vagrant$ git rev-list …

-

2015-08-04

Gluster Community Packages

The Gluster Community currently provides GlusterFS packages for the following distributions: 3.5 3.6 3.7 Fedora 21 ¹ × × Fedora 22 × ¹ × Fedora 23 × × ¹ Fedora 24 × × ¹ RHEL/CentOS 5 × × RHEL/CentOS 6 × × × RHEL/CentOS 7 × × × Ubuntu 12.04 LTS (precise) × × Ubuntu …

-

2015-07-23

Git archive with submodules and tar magic

Git submodules are actually a very beautiful thing. You might prefer the word powerful or elegant, but that’s not the point. The downside is that they are sometimes misused, so as always, use with care. I’ve used them in projects …

-

2015-07-19

Headed to OSCON

Once again, time for the annual trek to Portland, Oregon for OSCON — perhaps for the last time! Next year, OSCON is going to be in Austin, TX — which seems like a bit of a mistake to me. Portland and OSCON go together like milk and cookies. If you’re going to be at OSCON, […]

-

2015-04-08

GlusterFS-3.4.7 Released

The Gluster community is pleased to announce the release of GlusterFS-3.4.7. The GlusterFS 3.4.7 release is focused on bug fixes:

- 33608f5 cluster/dht: Changed log level to DEBUG
- 076143f protocol: Log ENODATA & ENOATTR at DEBUG in removexattr_cbk
- a0aa6fb build: argp-standalone, conditional build and build with gcc-5
- 35fdb73 api: versioned symbols in libgfapi.so for compatibility
- 8bc612d …

-

2015-03-27

GlusterFS 3.4.7beta4 is now available for testing

The 4th beta for GlusterFS 3.4.7 is now available for testing. A handful of bugs have been fixed since the 3.4.6 release, check the references below for details. Bug reporters are encouraged to verify the fixes, and we invite others to test this beta to check for regressions. The ETA for 3.4.7 GA is tentatively …

-

2015-03-17

GlusterFS 3.4.7beta2 is now available for testing

The 2nd beta for GlusterFS 3.4.7 is now available for testing. A handful of bugs have been fixed since the 3.4.6 release, check the references below for details. Bug reporters are encouraged to verify the fixes, and we invite others to test this beta to check for regressions. The ETA for 3.4.7 GA is not …

-

2015-01-27

GlusterFS Office Hours at FOSDEM

Lalatendu Mohanty, Niels de Vos, and I will be holding GlusterFS Office Hours at FOSDEM. Look for us at the CentOS booth, from 16h00 to 17h00 on Saturday, 31 January. FOSDEM is taking place this weekend, 31 Jan and 1 Feb, at ULB Solbosch Campus, Brussels. FOSDEM is a free event, no registration is necessary. …

-

2015-01-27

GlusterFS 3.6.2 GA released

The release source tar file and packages for Fedora {20,21,rawhide}, RHEL/CentOS {5,6,7}, Debian {wheezy,jessie}, Pidora2014, and Raspbian wheezy are available at http://download.gluster.org/pub/gluster/glusterfs/3.6/3.6.2/ (Ubuntu packages will be available soon.) This release fixes the following bugs. Thanks to all who submitted bugs and patches and reviewed the changes. 1184191 – Cluster/DHT : Fixed crash due to null …

-

2014-12-07

Configured Zabbix to keep my server cool

Recently I got myself an APC NetShelterCX mini. It is a 12U rack, with integrated fans for cooling. At the moment it is populated with some ARM boards (not rack mounted), their PDUs, a switch and (for now) one 2U server. Surprisingly, the fans of the N…

-

2014-11-17

Testing GlusterFS with very fast disks on Fedora 20

In the past I used to test with RAM-disks, provided by /dev/ram*. Gluster uses extended attributes on the filesystem, which makes it impossible to use tmpfs. While thinking about improving some of the GlusterFS regression tests, I noticed that Fedora 20 (and possibly earlier versions too) no longer provides the /dev/ram* devices. I could not find the needed kernel module quickly, so I decided to look into the newer zram module.

Getting zram working seems to be pretty simple. By default one /dev/zram0 is made available after loading the module. But, if needed, the module offers a parameter num_devices to create more devices. After loading the module with modprobe zram, you can do the following to create your high-performance volatile storage:

    # SIZE_2GB=$(expr 1024 \* 1024 \* 1024 \* 2)
    # echo ${SIZE_2GB} > /sys/class/block/zram0/disksize
    # mkfs -t xfs /dev/zram0
    # mkdir -p /bricks/fast
    # mount /dev/zram0 /bricks/fast

With this mountpoint it is now possible to create a Gluster volume:

    # gluster volume create fast ${HOSTNAME}:/bricks/fast/data
    # gluster volume start fast

Once done with testing, stop and delete the Gluster volume, and free the zram like this:

    # gluster volume stop fast
    # gluster volume delete fast
    # umount /bricks/fast
    # echo 1 > /sys/class/block/zram0/reset

Of course, unloading the module with rmmod zram would free the resources too.
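For scripted test setups, the two sysfs writes used above could also be done from Python. This is a minimal sketch, assuming the zram module is already loaded and the script runs as root; the helper names and the `sysfs_root` parameter (added so the functions can be exercised against a scratch directory) are hypothetical and not part of any zram or Gluster tooling. The 2 GiB value is the same 2147483648 bytes that `SIZE_2GB` holds.

```python
from pathlib import Path

SYSFS_BLOCK = "/sys/class/block"
SIZE_2GB = 2 * 1024 ** 3  # 2147483648 bytes, same as SIZE_2GB in the shell steps

def zram_attr(device, attr, sysfs_root=SYSFS_BLOCK):
    """Return the path of a zram sysfs attribute, e.g. .../zram0/disksize."""
    return Path(sysfs_root) / device / attr

def set_disksize(device, size_bytes, sysfs_root=SYSFS_BLOCK):
    # Equivalent of: echo ${SIZE_2GB} > /sys/class/block/zram0/disksize
    zram_attr(device, "disksize", sysfs_root).write_text(f"{size_bytes}\n")

def reset_device(device, sysfs_root=SYSFS_BLOCK):
    # Equivalent of: echo 1 > /sys/class/block/zram0/reset
    # Unmount the filesystem first; the reset frees the device's memory.
    zram_attr(device, "reset", sysfs_root).write_text("1\n")
```

For example, `set_disksize("zram0", SIZE_2GB)` would run before `mkfs`, and `reset_device("zram0")` after `umount`.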

It is becoming more important for Gluster to be prepared for very fast disks. Hardware like Fusion-io flash drives, and in future Persistent Memory/NVM, will become more widely available in storage clouds, and of course we would like Gluster to stay part of that!

-

2014-11-07

Some notes on libgfapi.so symbol versions in GlusterFS 3.6.1

A little bit of background: we started to track API/ABI changes to libgfapi.so by incrementing the SO_NAME, e.g. libgfapi.so.0(.0.0). In the master branch it was incremented to ‘7’, or libgfapi.so.7(.0.0), for the eventual glusterfs-3.7. I believe, but I’m not entirely certain¹, that we were supposed to reset this when we branched for release-3.6. Reset …

-

2014-11-06

GlusterFS 3.4.6beta2 is now available for testing

Even though GlusterFS-3.6.0 was released last week, maintenance continues on the 3.4 stable series! The 2nd beta for GlusterFS 3.4.6 is now available for testing. Many bugs have been fixed since the 3.4.5 release, check the references below for details. Bug reporters are encouraged to verify the fixes, and we invite others to test this …

-

2014-11-05

Installing GlusterFS 3.4.x, 3.5.x or 3.6.0 on RHEL or CentOS 6.6

With the release of RHEL-6.6 and CentOS-6.6, there are now glusterfs packages in the standard channels/repositories. Unfortunately, these are only the client-side packages (like glusterfs-fuse and glusterfs-api). Users that want to run a Gluster Server…

-

2014-11-05

GlusterFS 3.5.3beta2 is now available for testing

Even though GlusterFS 3.6.0 was released last week, the 3.5 stable series continues to live on! The 2nd beta for GlusterFS 3.5.3 is now available for testing. Many bugs have been fixed since the 3.5.2 release, check the references below for detail…

-

2014-11-03

8th Bangalore Docker meetup and Global Hackathon#2

On 1st Nov’14, Red Hat offices in Bangalore and Pune hosted Docker meetups and a Hackathon. ~40 people attended the Bangalore meetup. Before the hackathon we had the following presentations: Docker Global Hackday opening by Avi Cavale, Co-founder and CEO, Shippable. Introduction to …

-

2014-10-23

2014 “State of Gluster” poll

As we roll towards the release of GlusterFS 3.6.0, it seemed a good time to find out what and how you are using GlusterFS. To that end, we’ve got a short poll up at https://www.surveymonkey.com/s/gluster2014, which should be pretty quick for you to take. This is completely anonymous, unless you choose to opt in to allow …

-

2014-10-22

Gluster Volume Snapshot Howto

This article provides details on how to configure a GlusterFS volume to make use of the Gluster Snapshot feature. As we discussed earlier, Gluster Volume Snapshot is based on thinly provisioned logical volumes (LV). Therefore here I wi…