-

2014-03-13

Understanding GlusterFS CLI Code – Part 1

The GlusterFS CLI code follows a client-server architecture, and we should keep that in mind while trying to understand the CLI framework: “glusterd” acts as the server and the gluster binary (i.e. /usr/sbin/gluster) acts as the client. In this write-up I have taken “gluster … Continue reading →

-

2014-02-16

Running GlusterFS inside docker container

As a part of GlusterFS 3.5 testing and hackathon, I decided to put GlusterFS inside a docker container. So I installed docker on my Fedora 20 desktop and then started a Fedora container. Once I was inside the container I installed GlusterFS … Continue reading →

-

2014-02-11

Using GlusterFS With GlusterFS Samba vfs plugin on Fedora 20

This blog covers the steps and implementation details for using the GlusterFS Samba VFS plugin. If you are looking for architectural information on the GlusterFS Samba VFS plugin, or the difference between a FUSE mount and the Samba VFS plugin, please refer to the link below: http://lalatendumohanty.wordpress.com/2014/04/20/glusterfs-vfs-plugin-for-samba/ I … Continue reading →

-

2014-01-27

Screencasts of Puppet-Gluster + Vagrant

I decided to record some screencasts to show how easy it is to deploy GlusterFS using Puppet-Gluster+Vagrant. You can follow along even if you don’t know anything about Puppet or Vagrant. The hardest part of this process was producing the … Continue reading →

-

2014-01-09

Configuring OpenStack Havana Cinder, Nova and Glance to run on GlusterFS

Configuring Glance, Cinder and Nova for OpenStack Havana to run on GlusterFS is actually quite simple, assuming that you’ve already got GlusterFS up and running.

So let’s first look at my Gluster configuration. As you can see below, I have a Gluster volume defined for Cinder, Glance and Nova.… Read the rest

The post Configuring OpenStack Havana Cinder, Nova and Glance to run on GlusterFS appeared first on vmware admins.

-

2013-12-19

Installing GlusterFS on RHEL 6.4 for OpenStack Havana (RDO)

The OpenCompute systems are the ideal hardware platform for distributed filesystems. Period. Why? Cheap servers with 10Gb NICs and a boatload of locally attached cheap storage!

In preparation for deploying RedHat RDO on RHEL, the distributed filesystem I chose was GlusterFS.… Read the rest

The post Installing GlusterFS on RHEL 6.4 for OpenStack Havana (RDO) appeared first on vmware admins.

-

2013-11-27

Effective GlusterFS monitoring using hooks

Imagine a GlusterFS monitoring system that displays the list of volumes and their state. To show real-time status, the monitoring app needs to query GlusterFS at regular intervals to check volume status, new volumes, etc. If the polling interval is 5 seconds, the monitoring app has to run the `gluster volume info` command ~17000 times a day!

How about maintaining a state file on each node instead, which gets updated after every new GlusterFS event (create, delete, start, stop, etc.)?
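For a sense of scale, the polling cost can be checked with a quick back-of-the-envelope calculation. The helper name `polls_per_day` is only illustrative; the 5-second interval is the one assumed above.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def polls_per_day(interval_seconds):
    """Number of times a fixed-interval poller fires in one day."""
    return SECONDS_PER_DAY // interval_seconds


print(polls_per_day(5))  # 17280
```

Every one of those polls spawns a CLI process, whether or not anything has changed on the node.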

In this blog post I explain how such a state file can be created and used.

As of today GlusterFS provides the following hooks, which we can use to update our state file:

create, delete, start, stop, add-brick, remove-brick, set

How to use hooks

GlusterFS hooks live in the `/var/lib/glusterd/hooks/1` directory. The following example sends a message to all users with the `wall` command whenever a new GlusterFS volume is created.

Create a shell script `/var/lib/glusterd/hooks/1/create/post/SNotify.bash` and make it executable. Whenever a volume is created, GlusterFS executes all the executable scripts present in the respective hook directory (GlusterFS executes only the scripts whose filename starts with ‘S’).

    #!/bin/bash
    # Post-create hook: announce the new volume to all logged-in users.
    VOL=
    # Parse the --volname long option given to the hook.
    ARGS=$(getopt -o "" -l "volname:" -n "$(basename "$0")" -- "$@")
    eval set -- "$ARGS"
    while true; do
        case $1 in
            --volname)
                shift
                VOL=$1
                ;;
            *)
                # Anything else (including the "--" terminator) ends parsing.
                shift
                break
                ;;
        esac
        shift
    done
    wall "Gluster Volume Created: $VOL"

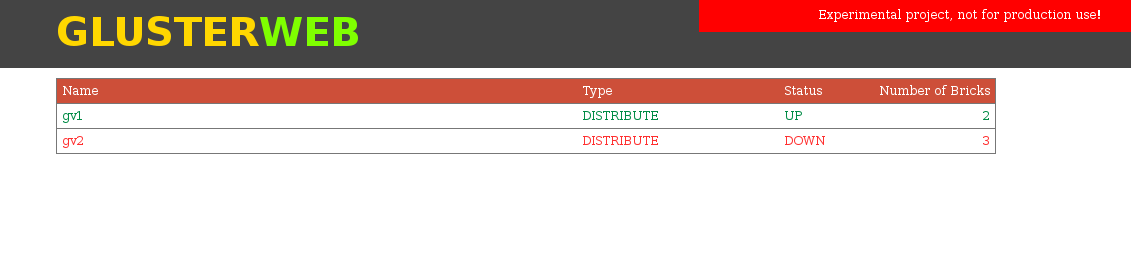
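The same kind of hook could also maintain the per-node state file suggested earlier. The sketch below is an illustration only, not code from GlusterFS or glusterfs-tools: it assumes the hook is invoked with a `--volname` option (as the bash script above expects) and Python 3, and the JSON layout and the function names `parse_volname` and `record_event` are hypothetical.

```python
import argparse
import json
import os
import tempfile


def parse_volname(argv):
    """Extract --volname from the hook arguments, ignoring anything else."""
    parser = argparse.ArgumentParser(add_help=False)
    parser.add_argument("--volname", default="")
    args, _unknown = parser.parse_known_args(argv)
    return args.volname


def record_event(state_path, volname, event):
    """Store the latest event per volume in a JSON state file (hypothetical layout)."""
    state = {}
    if os.path.exists(state_path):
        with open(state_path) as f:
            state = json.load(f)
    state[volname] = event
    # Write to a temporary file first, then rename it into place, so a
    # reader never sees a half-written state file.
    directory = os.path.dirname(state_path) or "."
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, state_path)
    return state
```

A create hook built on this would call `record_event(path, parse_volname(sys.argv[1:]), "create")`, with `path` pointing at whatever location you choose for the state file. The monitoring app then reads one small file instead of running the CLI on every poll.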

Experimental project – GlusterWeb

This experimental project maintains a SQLite database, `/var/lib/glusterd/nodestate/glusternodestate.db`, which gets updated after any GlusterFS event. For example, if a GlusterFS volume is created, it updates the volumes table and also the bricks table.

This project depends on glusterfs-tools, so install both projects.

    git clone https://github.com/aravindavk/glusterfs-tools.git
    cd glusterfs-tools
    sudo python setup.py install
    cd ..
    git clone https://github.com/aravindavk/glusterfs-web.git
    cd glusterfs-web
    sudo python setup.py install

Running setup installs all the hooks required for monitoring (`cleanup` removes all the hooks).

    sudo glusternodestate setup

All set! Now run `glusterweb` to start the web app.

    sudo glusterweb

The web application runs at http://localhost:8080. You can change the port using the `--port` or `-p` option.

    sudo glusterweb -p 9000

Initial version of web interface.
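To make the state database idea concrete, here is a minimal sketch of how a create event might update a volumes table and a bricks table. The column names, the `Created` status value and the function names are assumptions made for illustration; the actual schema used by glusternodestate may well differ.

```python
import sqlite3

# Hypothetical schema: one row per volume, one row per brick of a volume.
SCHEMA = """
CREATE TABLE IF NOT EXISTS volumes (
    name   TEXT PRIMARY KEY,
    status TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS bricks (
    volume TEXT NOT NULL REFERENCES volumes(name),
    path   TEXT NOT NULL,
    PRIMARY KEY (volume, path)
);
"""


def open_state_db(path):
    """Open the state database, creating the tables on first use."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def on_volume_create(conn, volname, brick_paths):
    """Record a newly created volume and its bricks in one transaction."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT OR REPLACE INTO volumes (name, status) VALUES (?, ?)",
            (volname, "Created"),
        )
        conn.executemany(
            "INSERT OR REPLACE INTO bricks (volume, path) VALUES (?, ?)",
            [(volname, path) for path in brick_paths],
        )


def list_volumes(conn):
    """What a web UI would read instead of calling the gluster CLI."""
    return conn.execute(
        "SELECT name, status FROM volumes ORDER BY name"
    ).fetchall()
```

Passing `":memory:"` to `open_state_db` gives a throwaway database, which is handy for trying the functions out without touching `/var/lib/glusterd`.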

Future plans

Authentication: Option to provide a username and password or an access key while running glusterweb. For example:

    sudo glusterweb --username aravindavk --password somesecret
    # or
    sudo glusterweb --key secretonlyiknow

More gluster hooks support: We need more GlusterFS hooks for better monitoring (see Problems below).

More GlusterFS features support: As an experiment, the UI only lists volumes; we need an improved UI and support for different gluster features.

Actions support: Support for volume creation, adding/removing bricks, etc.

REST API and SDK: Providing a REST API for gluster operations.

Many more: Not yet planned 🙂

Problems

State file consistency: If glusterd goes down on a node, the database will have wrong details about the node’s state. One workaround is a cron job that resets the database when glusterd is down. But when glusterd comes back up, the database does not get updated and still holds the previously written details. To prevent this we need a GlusterFS hook for glusterd-start.

More hooks: As of today we don’t have hooks for volume down/up, brick down/up and other events. We need the following hooks for effective monitoring of GlusterFS (add more if anything is missing from the list):

glusterd-start, peer probe, peer detach, volume-down, volume-up, brick-up, brick-down
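The cron-job workaround mentioned under state file consistency could look roughly like the sketch below. It is only an illustration under stated assumptions: it treats glusterd as up when its management port (24007 by default) accepts a TCP connection, and it assumes the database has tables named volumes and bricks; the function names are hypothetical.

```python
import socket
import sqlite3

GLUSTERD_PORT = 24007  # glusterd's default management port


def glusterd_is_up(host="127.0.0.1", port=GLUSTERD_PORT, timeout=2.0):
    """Treat glusterd as up if its management port accepts a connection."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def reset_state_if_down(conn, is_up):
    """Clear the recorded state when glusterd is down; return True if reset."""
    if is_up:
        return False
    with conn:  # one transaction: both tables are cleared or neither is
        conn.execute("DELETE FROM bricks")
        conn.execute("DELETE FROM volumes")
    return True
```

A cron entry would run a small script that calls `reset_state_if_down(conn, glusterd_is_up())`. Note that this reproduces the limitation described above: once glusterd is back, nothing repopulates the tables until the next hook fires, which is why a glusterd-start hook is needed.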

Let me know your thoughts! Thanks.

-

2013-11-26

GlusterFS Block Device Translator

Block device translator (BD xlator) is a new translator added to GlusterFS recently which provides a block backend for GlusterFS. This replaces the existing bd_map translator in GlusterFS that provided similar but very limited functionality. GlusterFS expects the underlying brick to be formatted with a POSIX compatible file system. BD xlator changes that […]

-

2013-11-26

A Gluster Block Interface – Performance and Configuration

This post shares some experiences I’ve had in simulating a block device in gluster. The block device is a file based image, which acts as the backend for the Linux SCSI target. The file resides in gluster, so enjoys gluster’s feature set. But the client only sees a block device. The Linux SCSI target presents …Read more

-

2013-11-05

Special Report: Scale Out with GlusterFS

Learn how to install, benchmark and optimize this popular, shared-nothing, scalable open-source distributed filesystem in this special 12-page report.


-

2013-11-03

Speaking at LISA 2013 about Puppet and GlusterFS

I’m speaking at LISA 2013, the “Large Installation System Administration” conference. This conference runs all week in Washington. I’ll be giving two talks during the week, and attending at least one BOF. My first talk is on Monday during the … Continue reading →

-

2013-10-22

Keeping your VMs from going read-only when encountering a ping-timeout in GlusterFS

GlusterFS communicates over TCP. This allows for stateful handling of file descriptors and locks. If, however, a server fails completely (kernel panic, power loss, some idiot with a reset button…), the client will wait for ping-timeout (42 by the defau…

-

2013-09-24

GlusterFS as Distributed Storage in OpenStack

The slides I used for the talk on “Distributed Storage in OpenStack” during the recent OpenStack India Day 2013 event can be found here. The presentation uses GlusterFS as an example of distributed storage and discusses integration between GlusterFS and various OpenStack components.

-

2013-09-16

GlusterFS presentation @LSPE-IN, Yahoo!

Last Saturday, 14th September ’13, I gave a presentation on GlusterFS at LSPE-IN. The title of the presentation was “Performance Characterization in Large distributed file system with GlusterFS”. A few days before the talk I looked at the attendee list to get the … Continue reading →

-

2013-09-05

Enabling Apache Hadoop on GlusterFS: glusterfs-hadoop 2.1 released

The Gluster community is pleased to announce a major update to the glusterfs-hadoop project with the release of version 2.1. The glusterfs-hadoop project provides an Apache licensed Hadoop FileSystem plugin which enables Apache Hadoop 1.x and 2.x to run directly on top of GlusterFS. This release includes a re-architected plugin which now extends existing functionality within …Read more

-

2013-09-02

Puppet-Gluster and me at Linuxcon

John Mark Walker (from Red Hat) has been kind enough to invite me to speak at the Linuxcon Gluster Workshop in New Orleans. I’ll be speaking about puppet-gluster, giving demos, and hopefully showing off some new features. I’m also looking forward … Continue reading →

-

2013-08-20

Troubleshooting QEMU-GlusterFS

As described in my previous blog post, QEMU supports talking to GlusterFS using libgfapi, which is a much better way to use GlusterFS to host VM images than using the FUSE mount to access GlusterFS volumes. However, due to some bugs that exist in GlusterFS-3.4, any invalid specification of a GlusterFS drive on the QEMU command line […]

-

2013-08-07

UNMAP/DISCARD support in QEMU-GlusterFS

In my last blog post on QEMU-GlusterFS, I described the integration of QEMU with GlusterFS using libgfapi. In this post, I give an overview of the recently added discard support in QEMU’s GlusterFS back-end and how it can be used. Newer SCSI devices support the UNMAP command, which is used to return the unused/freed blocks back […]

-

2013-08-01

Replacing a brick on GlusterFS 3.4.0

I retweeted the other day:

99 little bugs in the code
99 little bugs in the code
Take one down, patch it around
117 little bugs in the code

Looks like it happened again. In an effort to protect you from having self-heal fill up your root partition shoul…

-

2013-07-28

Feedback requested: Governance of GlusterFS project

We are in the process of formalizing the governance model of the GlusterFS project. Historically, the governance of the project has been loosely structured. This is an invitation to all of you to participate in this discussion and provide your feedback and suggestions on how we should evolve a formal model. Feedback from this thread will […]